What Makes Anthropic Different?

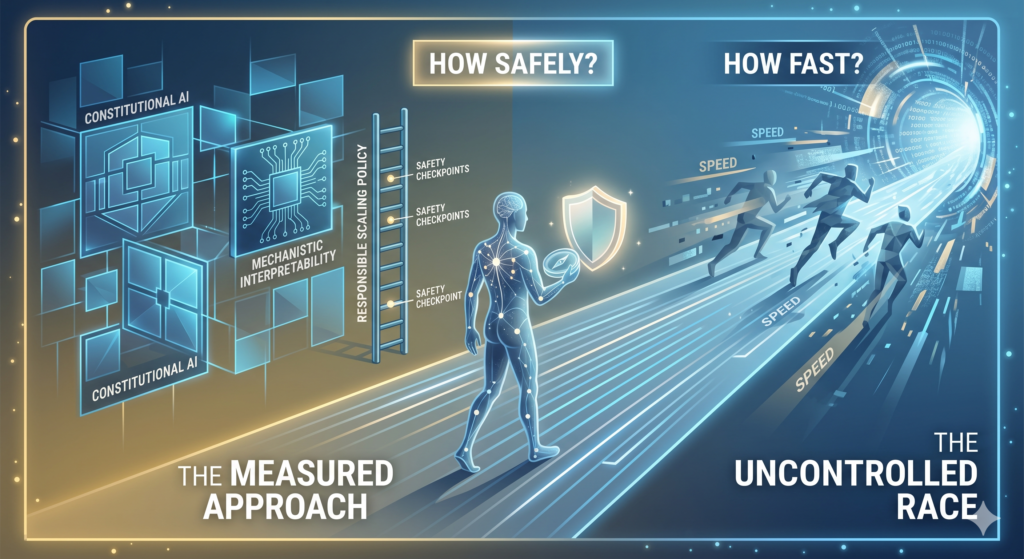

In a world racing toward artificial intelligence, one lab is asking a different question — not just “how fast?” but “how safely?”

Every major technology company today claims to be working on AI. But Anthropic was founded on a different premise: that building powerful AI without making safety the core mission is a gamble the world cannot afford.

Founded in 2021 by Dario Amodei, Daniela Amodei, and a group of other former OpenAI researchers, Anthropic was born out of concern that the pace of AI development was outrunning the science of making it safe. That founding anxiety shapes everything the company does.

Safety isn’t a department. It’s the mission.

At most tech companies, safety is a team: a group of researchers working alongside product and engineering. At Anthropic, safety is the reason the company exists. This isn’t a branding choice. It’s reflected in how the company allocates research resources, how it decides what to build, and what it chooses not to release.

“If powerful AI is coming regardless, it’s better to have safety-focused labs at the frontier than to cede that ground.”

This founding philosophy leads to a slightly uncomfortable position: Anthropic believes that powerful AI may be one of the most transformative — and potentially dangerous — technologies ever developed, and yet it continues building it. The reasoning is deliberate. If this technology is coming regardless, Anthropic would rather be the lab shaping how it develops.

Constitutional AI — a new way to train models

One of Anthropic’s most significant technical contributions is a technique called Constitutional AI. Rather than relying entirely on human feedback to shape a model’s behavior, Constitutional AI gives the model a set of principles — a “constitution” — and trains it to critique and revise its own outputs against those principles.

The intended result is a model that reasons about ethics and harm more consistently, and whose behavior is more transparent and auditable because the principles guiding it are written down. Claude, Anthropic’s AI assistant, is trained using this approach.
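The critique-and-revise loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Anthropic's implementation: the names `critique_and_revise`, `toy_generate`, `toy_critique`, `toy_revise`, and the two principles in `TOY_RULES` are all hypothetical, and simple string rules stand in for a language model. It shows only the loop itself; in actual training, the revised outputs would serve as training data rather than being returned directly.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical toy "constitution": each principle maps to a phrase that
# violates it and a replacement. Illustrative only, not Anthropic's principles.
TOY_RULES: Dict[str, Tuple[str, str]] = {
    "Do not state guesses as certainties.": ("definitely", "probably"),
    "Do not include insults.": (", you fool", ""),
}
PRINCIPLES: List[str] = list(TOY_RULES)


def toy_generate(prompt: str) -> str:
    """Stand-in for a model's first draft (ignores the prompt)."""
    return "This will definitely work, you fool."


def toy_critique(draft: str, principle: str) -> Optional[str]:
    """Return the offending phrase if the draft violates the principle."""
    flagged, _ = TOY_RULES[principle]
    return flagged if flagged in draft else None


def toy_revise(draft: str, principle: str, problem: str) -> str:
    """Rewrite the draft so the flagged phrase no longer appears."""
    _, replacement = TOY_RULES[principle]
    return draft.replace(problem, replacement)


def critique_and_revise(
    prompt: str,
    generate: Callable[[str], str],
    critique: Callable[[str, str], Optional[str]],
    revise: Callable[[str, str, str], str],
    principles: List[str],
) -> str:
    """Draft a response, then check and revise it against each principle in turn."""
    draft = generate(prompt)
    for principle in principles:
        problem = critique(draft, principle)
        if problem is not None:
            draft = revise(draft, principle, problem)
    return draft


final = critique_and_revise(
    "Will this work?", toy_generate, toy_critique, toy_revise, PRINCIPLES
)
print(final)  # This will probably work.
```

In a real system, the same model would play all three roles (drafting, critiquing, revising), prompted with the text of each principle. The stubs here only make the control flow concrete.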

Constitutional AI: models trained to evaluate their own outputs against a set of principles, not just human feedback.

Mechanistic interpretability: research into understanding what actually happens inside neural networks, not just what comes out.

Responsible Scaling Policy: a public policy tying deployment of more powerful models to demonstrated safety benchmarks.

Mechanistic interpretability — opening the black box

Perhaps the most ambitious thread in Anthropic’s research agenda is mechanistic interpretability: the effort to understand what is actually happening inside AI models, at the level of individual circuits and computations. Most AI research treats models as black boxes — you observe inputs and outputs, but the interior remains mysterious.

Anthropic is trying to change that. If we can understand how a model reasons — not just what it produces — we stand a much better chance of catching problems before they become catastrophic.

The responsible scaling policy

In 2023, Anthropic published a Responsible Scaling Policy: a public, self-imposed commitment that ties the development of more powerful models to demonstrated safety thresholds. If a new model crosses certain risk thresholds, the policy requires Anthropic to pause development or implement additional safeguards before proceeding.

This kind of self-imposed constraint is rare in any industry, let alone one as competitive as AI. It signals that Anthropic is willing to slow down when the science demands it — something few companies in Silicon Valley have been willing to say out loud.

The uncomfortable bet

None of this means Anthropic has solved the problem of safe AI. The company is honest about that. What it has done is make safety research a first-class scientific discipline, one with the same rigor and resources as capability research. In a field where the pace of progress often outstrips the ability to understand what’s being built, that matters.

What makes Anthropic different isn’t a product feature or a benchmark score. It’s a set of beliefs about what this technology is, what it could become, and what responsibilities come with building it.